When a user asks their device to play alternative rock, how much of the music their device plays is actually alternative rock?

Evan Paul, Pandora Radio’s product manager on the music recommendation team, explored this question today in the Austin Convention Center at SXSW. By introducing the challenges humans and machines often encounter when classifying music, he set the stage for the future of personalized music recommendations.

Paul started by reflecting on the history of music before the internet. He said music was previously defined entirely by humans.

“The music experience was either defined by the artist’s music or record label,” Paul said.

Now, Paul said, people have a broader range of choices in what they hear and how they hear it. However, that freedom puts the burden on machines to do the work for users.

“Machines and devices need to be able to respond to these free-form requests properly and accurately,” Paul said.

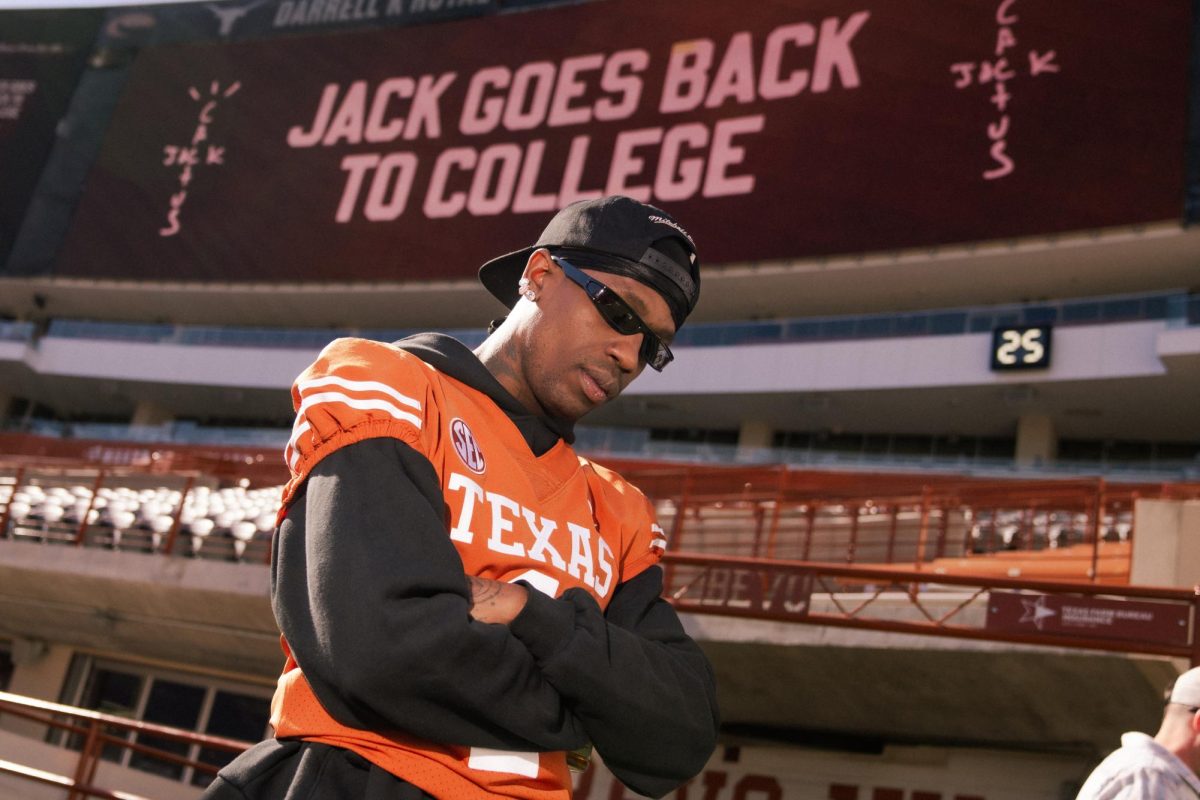

Paul illustrated this by describing a test in which he asked three different voice services to play hip-hop. The services responded by playing Kendrick Lamar’s “PRIDE.,” House of Pain’s “Jump Around” and Snoop Dogg’s “Gin and Juice.”

“Which was the best recommendation?” Paul said. “All three of these could objectively be hip-hop. It depends on who’s asking for it.”

For this reason, Paul said, personalization in response to free-form requests is necessary. Pandora used a product it launched last year as a case study: personalized soundtracks that were automatically generated based on a person’s affinity for a particular genre, mood or activity.

The team found that the soundtrack themed “happy” played Foster The People’s “Pumped Up Kicks.” However, Paul said the song is not a happy one, as it describes a school shooter.

“To a machine, this song hit all the characteristics of a happy song,” Paul said. “But to a discerning listener’s perspective, this song is not happy at all.”

The team concluded that machines need humans to understand listeners’ expectations.

“We need to bring humans back into the equation,” Paul said. “The algorithms today do not accurately capture the nuances of culture and human experiences.”

The team also concluded that listeners in general are not always right. After the machine classified Disclosure’s “White Noise” as “deep house,” some listeners were pleased with the classification while others disagreed.

“Clearly, there’s a dissonance here with the mainstream definition of genre and technical definition,” Paul said.

This brought the team to its next conclusion: the individual listener is always right, because of the purpose recommendations serve.

“Music recommendations are all about pleasing the listeners that ask for them,” Paul said.

Paul concluded that personalization is vital and that streaming services should provide it for their listeners, because music recommendation should be a joint effort between humans and machines.

“Music classification is really hard, not just for machines but for people as well,” Paul said. “How can we possibly expect machines to classify music correctly if humans have difficulty with it?”