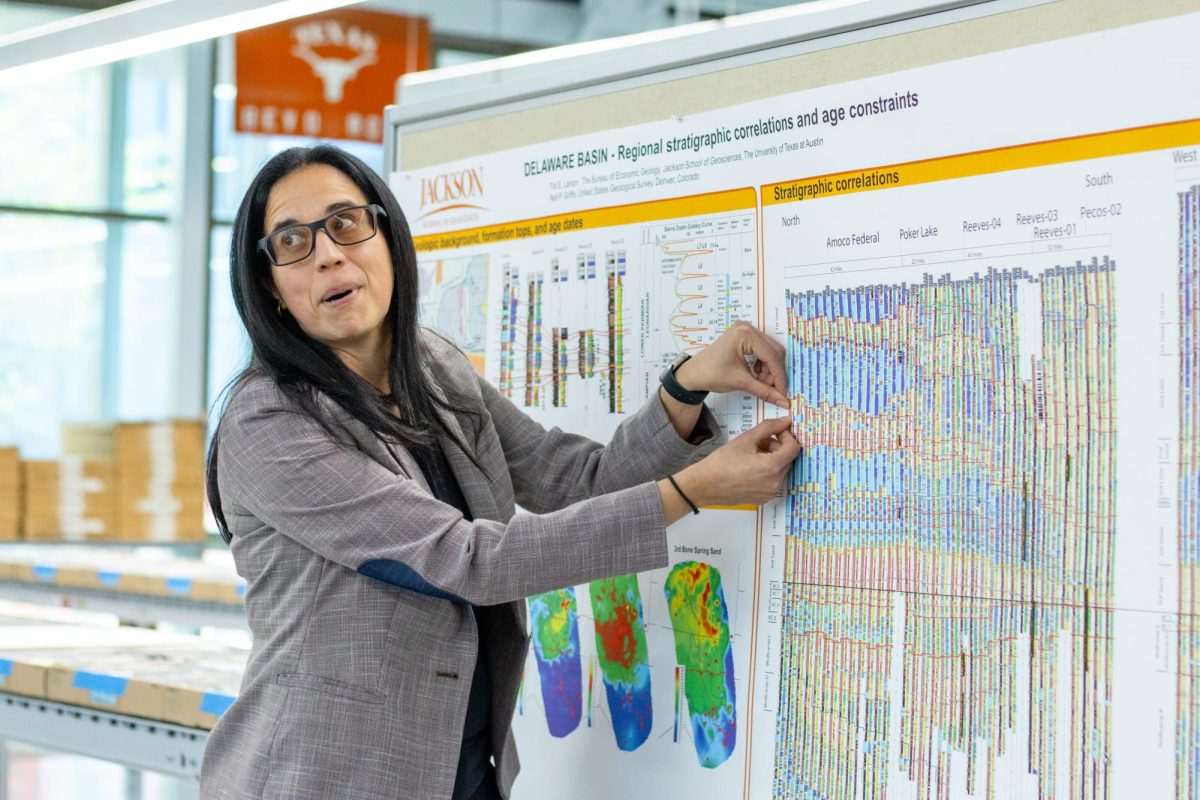

During the Language, Mind and Ethics in AI event on Oct. 30, an associate linguistics professor discussed her preliminary research on medical text summarization, which laid the groundwork for the $1.3 million grant she and her team received to continue the work.

Jessy Li’s grant project, which she is working on with Professors Byron Wallace and Wei Xu and which is funded by the National Institutes of Health, will look into improving the accuracy and readability of large language models’ plain-language summaries of medical documents. It proposes various techniques to improve these models’ ability to detect errors in their responses and alert readers when a mistake has been made.

“Hopefully, we will develop some tool such that when people are using LLMs to help them understand medical texts, they will be able to see where the model may likely be making an error or the model is not very confident,” Li said.

Large language models are artificial intelligence tools that are trained on large sets of data and can recognize and generate human-language text. According to Li, models like ChatGPT can turn abstract medical research into easy-to-understand information for the general public and are already being used for this purpose. However, because of the nature of these tools and their inability to identify when they have made a mistake, the information they generate can often be misleading.

The preliminary research, led by computer science doctoral student Sebastian Joseph, assessed the models’ summaries of simplified medical documents and found that the models struggle to balance factuality and clarity. During the event, Li outlined the inaccuracies the research found in the models’ responses, which included AI hallucinations, a term for factual errors ranging from minor inconsistencies to major misrepresentations, as well as potentially harmful conclusions.

“There’s an inherent trade-off with being precise and also explaining things in plain language,” Li said. “There are certain elements of a trial that you cannot omit and (that) need to be conveyed.”

Both the National Institutes of Health and Michael Mackert, director of Dell Medical School’s Center for Health Communication, believe large language models offer the public an opportunity to access newly published medical research, but that further work must be done to mitigate the risk of misinformation.

Mackert is working with Li on the grant project, which is currently in its formative phase.

“One of the upsides of this kind of research is it can shorten the window from complicated health research that is being published in medical journals into a format for patients who are doing their own research and want to advocate for themselves as patients,” Mackert said. “It can, with the right guardrails and the right technology in place, democratize a lot of health information.”

Editor’s note: A previous version of this story misstated the amount of the grant and did not list all contributors to the project. This has been corrected. The Texan regrets this error.