Supercomputers can perform thousands of trillions of complex operations per second, but maintaining them is half the battle.

Last Wednesday, Tim Osborne, a UT alumnus who works as a systems analyst and geophysical programmer for ConocoPhillips, gave a talk at the Gates Dell Complex about the benefits and challenges of high-performance computing, or HPC.

HPC runs complex problems across multiple computers at the same time. It can be achieved through supercomputers, which have specialized hardware, or through clusters, which are regular computers aggregated together.

In ConocoPhillips’ case, the company uses a cluster of 5,136 computers, known as nodes, all stacked into a room 60 feet by 112 feet. The trade-off for this type of processing power, though, is the increased use of electricity.

“Our power bill used to be over $100,000 a month,” Osborne said. “To put what we use now in perspective, if you took enough power to run 1,000 residential homes in Houston for a month, we use that in an hour.”

Osborne said the cost is necessary for the company, though. ConocoPhillips uses HPC for two main purposes: seismic processing, which is based on geophysics, and oil reservoir engineering.

On the seismic side, the cluster is used to create detailed images of rock layers, which help ConocoPhillips determine where to find oil. The company sends a truck that generates seismic waves to a specific site, then records how the waves return to the truck from the ground. The cluster analyzes the strength of the returning signals and the time they took to arrive to create its images.
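As a rough illustration of why arrival time matters, the sketch below turns a round-trip travel time into an estimated depth for a reflecting rock layer. It is not ConocoPhillips' method. It assumes a single constant wave speed through the ground, and the function name and input values are hypothetical; real seismic imaging is far more involved.

```python
def reflector_depth_m(two_way_time_s: float, velocity_m_per_s: float) -> float:
    """Estimate the depth of a reflecting layer.

    Assumes the wave travels straight down and back up at one constant
    speed, so the one-way distance is half of speed times round-trip time.
    """
    if two_way_time_s < 0 or velocity_m_per_s <= 0:
        raise ValueError("time must be non-negative and velocity positive")
    return velocity_m_per_s * two_way_time_s / 2.0


# Hypothetical inputs: a 2-second round trip at 3,000 meters per second.
depth = reflector_depth_m(two_way_time_s=2.0, velocity_m_per_s=3000.0)
print(f"Estimated depth: {depth:.0f} m")
```

Repeating a calculation like this for many recordings and many layers is part of what makes the workload large enough to need a cluster.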

For reservoir engineering, the cluster helps maximize production by simulating how changes in variables such as pressure and heat affect the oil reservoir. Each calculation can take several minutes to run, and the cluster performs them thousands of times.
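To see why thousands of multi-minute calculations call for a cluster, the sketch below compares one machine with the 5,136 nodes mentioned above. The run count and minutes per run are hypothetical, and the sketch assumes every run is independent and occupies exactly one node, which may not match how the real simulations are scheduled.

```python
import math


def wall_clock_minutes(runs: int, minutes_per_run: float, nodes: int) -> float:
    """Elapsed time when independent runs are spread evenly over nodes.

    Each node handles one run at a time, so the work finishes in
    ceil(runs / nodes) back-to-back "waves" of runs.
    """
    if runs < 0 or nodes < 1:
        raise ValueError("runs must be non-negative and nodes at least 1")
    waves = math.ceil(runs / nodes)
    return waves * minutes_per_run


NODES = 5136            # cluster size reported in the article
RUNS = 10_000           # hypothetical number of simulation runs
MINUTES_PER_RUN = 3.0   # hypothetical; the article says "several minutes"

one_machine = wall_clock_minutes(RUNS, MINUTES_PER_RUN, nodes=1)
cluster = wall_clock_minutes(RUNS, MINUTES_PER_RUN, nodes=NODES)
print(f"One machine: {one_machine / 60:.0f} hours")
print(f"Cluster:     {cluster:.0f} minutes")
```

Under these assumptions, work that would occupy a single machine for weeks fits into two waves on the cluster.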

Osborne, as the lead for the HPC Operations Team, works to improve runtimes for the cluster, a task he said can be frustrating at times.

“HPC problems are extremely complex,” Osborne said. “If a user says their job is running slowly, there’s a lot of communication involved to make all of this work. It could be storage, the computer node, a network problem — we’ve seen all of it. Once, we determined nodes at the bottom of a rack were performing worse because air was blowing past them too fast from vents on the floor.”

Despite the difficulty, Osborne said the job is rewarding.

“I’ve been there five years, and I haven’t stopped learning at all, and that’s not because I’m stupid,” Osborne said. “It’s satisfying to Google something and be incapable of finding the answer — and then solving it.”

UT computer science senior Austin Hounsel, who attended the talk, said afterwards he was intrigued by the real-world applications of HPC.

“I thought it was really interesting,” Hounsel said. “To see what people actually do in industry was eye-opening since I’ve only been exposed to what we’ve learned in class. [In class], we talk about how concepts are applied but don’t actually get to see the real use. Here, I could see how it benefited a company.”

HPC affects a wide variety of other fields, including health, aerospace engineering and storm prediction. Due to its ability to solve problems requiring simulation or using more data than a human can study, the technology is valuable in almost any computational field.

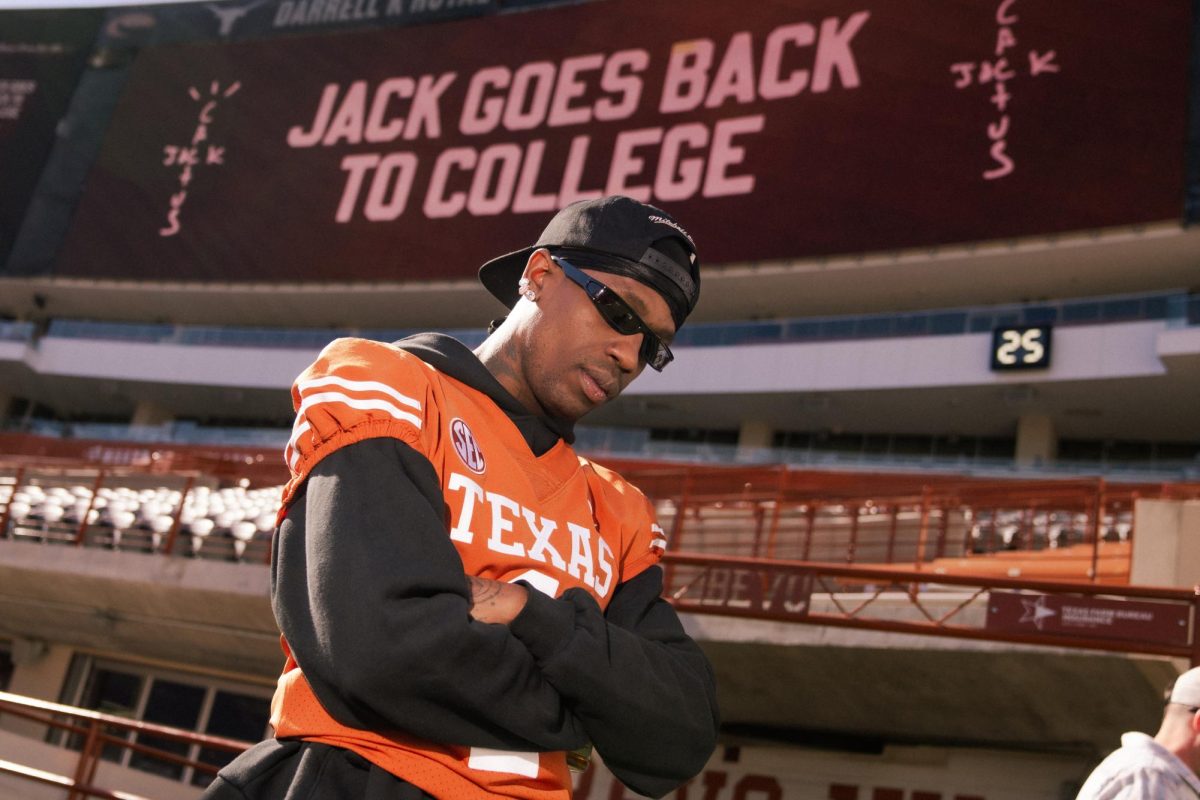

At the Texas Advanced Computing Center, UT’s Stampede is ranked 12th among the world’s supercomputers and gives students the opportunity to experience HPC.

“We have users in every college on campus at this point — folks in humanities, economics, just projects that require lots of computation,” TACC executive director Dan Stanzione said. “From a data analysis component, if you want to know how the universe works, you pretty much need HPC.”

In June, TACC announced Stampede 2, a new supercomputer Stanzione says will be in the top ten supercomputers worldwide and the largest of any U.S. university. Stampede 2 will begin replacing the current system in January, amid widespread pressure to push performance higher.

“There’s a huge competition right now on who will be leading the world,” Stanzione said. “HPC aids you in manufacturing, designing products — arguably, leadership in HPC is essential for economic growth and national security, and there have been significant pushes, particularly in China and India.”

The current goal, Stanzione said, is to reach exaflop-level performance. Today’s top supercomputers operate at the petaflop scale, where one petaflop is one quadrillion floating-point operations per second. One exaflop is one quintillion operations per second, or 1,000 times as many.
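The jump between the two scales is easier to grasp with numbers. The sketch below works through the arithmetic using the standard definitions of the prefixes; the workload size is hypothetical, chosen only to make the comparison readable, and the helper function is illustrative.

```python
PETAFLOP = 10**15  # one quadrillion floating-point operations per second
EXAFLOP = 10**18   # one quintillion floating-point operations per second


def seconds_to_finish(total_operations: int, flops: int) -> float:
    """Time to complete a workload at a sustained rate of `flops`."""
    if flops <= 0:
        raise ValueError("flops must be positive")
    return total_operations / flops


# An exaflop machine does 1,000 times the work of a petaflop machine.
speedup = EXAFLOP // PETAFLOP

# Hypothetical workload: 3.6 quintillion operations.
workload = 36 * 10**17
at_petaflop = seconds_to_finish(workload, PETAFLOP)
at_exaflop = seconds_to_finish(workload, EXAFLOP)

print(f"Speedup: {speedup}x")
print(f"At one petaflop: {at_petaflop / 60:.0f} minutes")
print(f"At one exaflop:  {at_exaflop:.1f} seconds")
```

This assumes the machine sustains its rated speed for the whole job, which real workloads rarely achieve.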

“We’re slowly running out of steam in the traditional way we make computers faster,” Stanzione said. “We need to be better at software and systems. We just have to look at how we build devices fundamentally.”