About 70 students took a study break to discuss the future of artificial intelligence Wednesday evening.

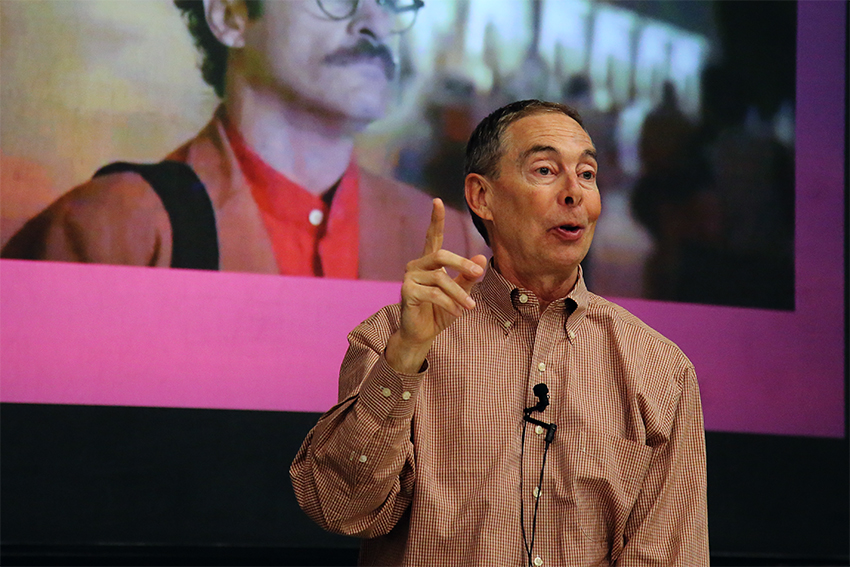

Bruce Porter, computer science department chair and professor, primarily researches artificial intelligence. He engaged students with topics such as free will in robots and videos of human and robot interactions. The talk was part of the University of Texas Libraries speaker series, “Science Study Break.”

“[Artificial intelligence] is an army of disposable data,” Porter said. “But when does it cross the line?”

Porter recalled a time when he was biking on 51st Street and a car almost hit him in the bike lane. He said the car turned out to be an autonomous vehicle. Porter then figured out that Google scientists determine a parameter, in this case the minimum distance between a vehicle and a cyclist.

“In [the case of accidents in] autonomous vehicles, we force the robots to [choose between hitting] the cyclist on the right, or four children on the left,” Porter said. “Does it require emotions, or free will?”

Porter discussed the three laws of robotics created by science fiction author Isaac Asimov. The first law is that a robot should not hurt a human or, by failing to take an action, let a human get hurt. The second is that a robot should follow a human’s orders except in situations that conflict with the first law. The third is that a robot should protect itself except in situations where its own protection conflicts with the first or second law.

Computer science senior Alexandra Gibner, who was at the event, said that when the UT robot soccer team was teaching robotic dogs how to walk, the team did not tell the dogs exactly how to step forward. The dogs independently figured out that when they shuffled on their knees, they became more stable and efficient. This surprised the team, she said.

Plant biology senior Katia Hougaard, who was also at the event, said that when an AI starts having desires independent of its programmer, which Porter cited as free will, it quickly becomes possible that the AI will destroy its programmer.

“I think what might happen if we gave robots the ability to desire, is that we would end up with a class of robot criminals,” Hougaard said. “I’m not saying that all independent desires are bad, but desire by its very nature is selfish. I think somehow building a moral code into an artificial intelligence would be the way around this.”

Porter said concern about robot free will will not become a pressing issue for another 15 to 20 years, but he cautioned that this is difficult to predict. He said AI scientists certainly had not foretold that a computer would eventually be able to play Go, a board game similar to chess, yet Google DeepMind’s AlphaGo program recently beat the human Go champion, Lee Sedol.

“[Elon Musk and Stephen Hawking’s] concern is that if an AI is able to learn [how] to read and [how] to learn, it could quickly outpace any human in its intelligence and problem solving abilities, and then we become obsolete,” Porter said. “That’s what they’re predicting — I’m not.”

While there are safety regulations on other technologies, such as cloning, Porter said there are currently no regulations when it comes to engineering AI.

“HAL computer in 2001, a fictional character in Arthur Clarke’s Odyssey series, didn’t have free will, but followed its own logic and decided that humans must die,” Porter said. “As an engineer of AI system[s], I don’t know where the line is. I need better, concrete guidelines where to draw that line.”